C³: What AI Can't Do Alone

80% of humanity has never used generative artificial intelligence proactively. 0.3% pays for a license.

Those are the numbers I opened with in the talk Suricata Labs brought to the SNBX Innovation Summit 2026, because they're uncomfortable for those of us who work in innovation. We live in a bubble. We assume everyone shares the urgency we feel, and that if something has been available for two years, it must already be mainstream. The numbers say otherwise.

The Summit, now in its fourth edition, brought together more than 450 people at the Hilton Hotel in San Salvador — entrepreneurs, investors, academics, and government officials — around a question with no easy answer: how do you build real innovation in the region? I was invited to speak about what AI can't do alone. And the answer I proposed has three components.

Friedman's Problem No Longer Applies

In 2005, Thomas Friedman published The World Is Flat and proposed a formula: CQ + PQ > IQ. Curiosity plus passion matter more than intelligence. His argument was that the internet flattened the world, information became accessible to everyone, and what differentiated you was no longer how smart you were but how curious you were.

He was right for his time. Friedman's problem was access: the information was there, but it took curiosity to find it.

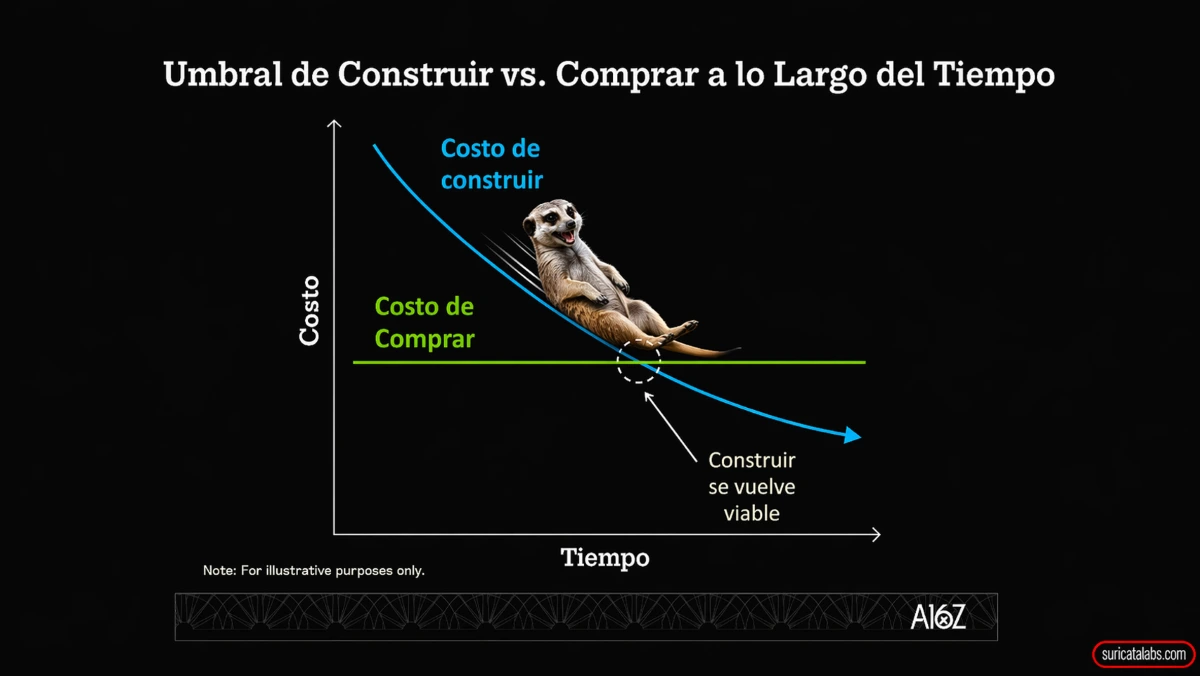

Today the problem isn't access. It's abundance. You don't lack information — you're drowning in it. You don't lack the capacity to generate — you have too much. In the AI era, ignorance stopped being a problem of access and became a problem of will. You're one prompt away from understanding how a financial model works, from exploring your industry's supply chain, from learning in a couple of hours what used to take weeks. The cost of asking has dropped to zero.

Curiosity is still essential. But it's not enough on its own.

The Trap of Unfiltered Creativity

When you let someone experiment without cost, interesting things happen. A few weeks ago my son asked me if we could build a computer game together. I don't know how to code. Three hours later we had something running on the screen and he was looking at me like I was a wizard.

That's the good side. AI didn't make us more creative. It removed our excuse for not being creative. The ideas were always there. What we lacked was the technical ability to execute them, or the willingness to invest hours in something that probably wouldn't work. AI lowered that cost.

But there's a trap that few people are watching. A study published in Science Advances evaluated hundreds of writers producing stories with and without AI. The AI-assisted stories were individually better — better written, more enjoyable. But they were significantly more similar to each other. Each person gained in quality; collectively, we lost diversity. And a second study found something more alarming: after seven days of using AI for creative tasks, when it was removed, participants' creativity didn't return to its baseline. It fell below it. They called it the creative scar. What you don't use, you lose.

Creativity isn't the output — it's not the image, the text, the prototype that AI generates. It's the capacity to connect what no one has connected before, to see that something can go further than what the machine proposes. And AI doesn't do that, because it doesn't know it should.

Looking Good Doesn't Mean Being Right

Anthropic analyzed nearly 10,000 conversations between people and its language model and found something that should unsettle any leader: the more polished and complete an AI output looks, the less humans question it. When something seems finished, we turn off our critical filter. We become less likely to verify the reasoning, to identify what's missing, or to ask whether what's in front of us is actually correct.

Judgment isn't distrust of the tool. It's what you bring from your accumulated experience: knowing what questions to ask, recognizing when an answer that seems complete ignores the specific context of your industry, your client, your market. AI doesn't know what you know. It doesn't know the conversations you had with your team last week, the contract that fell apart two years ago, or why that particular client always negotiates differently. All of that is judgment. And all of it comes from you.

Looking good doesn't mean being right. Knowing how to look at something polished and ask whether it's actually correct — or just fast — is what separates the professional who uses AI from the one who obeys it.

C³ = Curiosity × Judgment × Creativity

Those three components are the formula. I call it C³, and the key is that it's a multiplication, not a sum. When you add, one variable can compensate for the others. With multiplication, if any of the three is zero, the entire result is zero.
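
To make the difference between adding and multiplying concrete, here is a minimal sketch. It assumes each capacity could be scored from 0 to 1, which the talk never does: the scale, the sample numbers, and the function names are all illustrative.

```python
# Illustrative only: the 0-to-1 scores and both function names are
# assumptions made for this sketch, not measurements from the talk.

def additive(curiosity: float, judgment: float, creativity: float) -> float:
    """Sum model: a strong capacity can offset a missing one."""
    return curiosity + judgment + creativity


def c_cubed(curiosity: float, judgment: float, creativity: float) -> float:
    """Product model: any capacity at zero brings the result to zero."""
    return curiosity * judgment * creativity


# Two hypothetical profiles: one moderate across the board,
# one brilliant in two capacities with no judgment at all.
profiles = {
    "balanced": (0.6, 0.6, 0.6),
    "no judgment": (1.0, 0.0, 1.0),
}

for name, scores in profiles.items():
    print(f"{name}: sum={additive(*scores):.2f}, product={c_cubed(*scores):.3f}")
```

Under the sum, the profile with no judgment comes out ahead of the balanced one. Under the product, it scores zero, and the balanced profile is the only one left standing.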

Curiosity without judgment is an infinite rabbit hole. Judgment without curiosity is paralysis: repeating the model that worked five years ago without seeing that the market has changed. And creativity without either is generating for the sake of generating, without knowing what's worth building.

Researchers from Harvard and BCG ran an experiment with 758 consultants using AI on real tasks. They identified two types: Centaurs, who deliberately divided tasks between what they thought through alone and what they co-created with the machine, and Cyborgs, who integrated AI into absolutely everything. The Centaurs were more accurate. The difference wasn't the tool. It was the process: knowing when to think alone and when to think with assistance. That's C³ in practice.

The Formula Works When You Activate It

C³ isn't a theoretical model. It's a description of what the people and organizations that actually advance with AI are doing. They have the curiosity to explore without waiting for someone to give them permission. They have the creativity to take output further than the tool suggests by default. And they have the judgment to know when a result is good and when it only seems good.

The problem isn't the technology or access to it. It's that all three capacities require deliberate practice, and most organizations aren't building any of them intentionally. They're adopting tools. That's not the same thing.

Days before the Summit I explored the same framework from a different angle: why creative industries are particularly well-positioned in the AI era — and what that means for those working in culture, design, and media.

If you're interested in exploring how to apply this framework in your team or organization, reach out.